
As machine learning (ML) goes mainstream and gains wider adoption, ML-powered inference applications are becoming increasingly common for solving a range of complex business problems. Solving these complex business problems often requires multiple ML models and steps. This post shows you how to build and host an ML application with custom containers on Amazon SageMaker.

Amazon SageMaker offers built-in algorithms and pre-built SageMaker Docker images for model deployment. However, if these don't fit your needs, you can bring your own containers (BYOC) for hosting on Amazon SageMaker.

There are several use cases where users might need to bring their own containers for hosting on Amazon SageMaker.

Custom ML frameworks or libraries: If you plan on using an ML framework or libraries that aren't supported by Amazon SageMaker built-in algorithms or pre-built containers, then you'll need to create a custom container.

Specialized models: For certain domains or industries, you may require specific model architectures or tailored preprocessing steps that aren't available in the built-in Amazon SageMaker offerings.

Proprietary algorithms: If you've developed your own proprietary algorithms in-house, then you'll need a custom container to deploy them on Amazon SageMaker.

Complex inference pipelines: If your ML inference workflow involves custom business logic (a series of complex steps that must be executed in a particular order), then BYOC can help you manage and orchestrate these steps more efficiently.

Solution overview

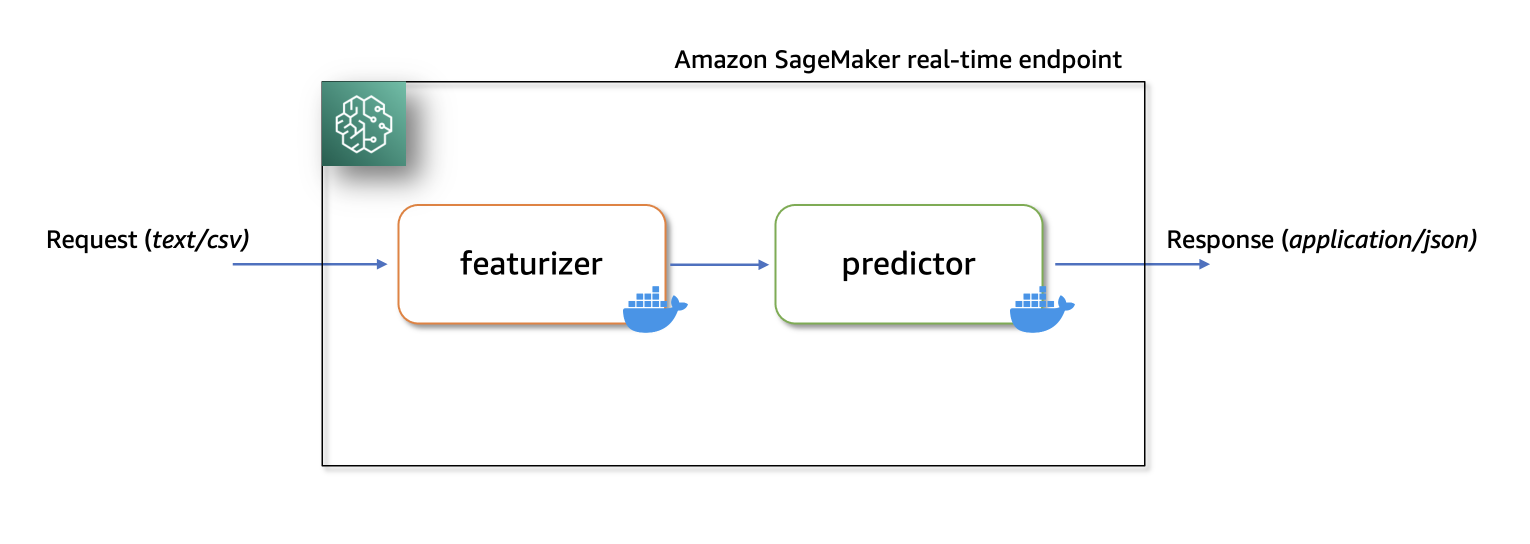

In this solution, we show how to host an ML serial inference application on Amazon SageMaker real-time endpoints using two custom inference containers with the latest scikit-learn and xgboost packages.

The first container uses a scikit-learn model to transform raw data into featurized columns. It applies StandardScaler to numerical columns and OneHotEncoder to categorical ones.

The second container hosts a pretrained XGBoost model (i.e., the predictor). The predictor model accepts the featurized input and outputs predictions.

Finally, we deploy the featurizer and predictor in a serial inference pipeline to an Amazon SageMaker real-time endpoint.

Here are a few considerations for why you may want separate containers within your inference application.

Decoupling – Different steps of the pipeline have a clearly defined purpose and need to run in separate containers because of the underlying dependencies involved. This also helps keep the pipeline well structured.

Frameworks – Different steps of the pipeline use specific fit-for-purpose frameworks (such as scikit-learn or Spark ML) and therefore need to run in separate containers.

Resource isolation – Different steps of the pipeline have varying resource consumption requirements and therefore need to run in separate containers for more flexibility and control.

Maintenance and upgrades – From an operational standpoint, this promotes functional isolation, so you can continue to upgrade or modify individual steps much more easily without affecting other models.

Additionally, building the individual containers locally helps with the iterative process of development and testing with your favorite tools and Integrated Development Environments (IDEs). Once the containers are ready, you can deploy them to the AWS Cloud for inference using Amazon SageMaker endpoints.

The full implementation, including code snippets, is available in this GitHub repository.

Prerequisites

Because we test these custom containers locally first, you'll need Docker Desktop installed on your local computer. You should be familiar with building Docker containers.

You'll also need an AWS account with access to Amazon SageMaker, Amazon ECR, and Amazon S3 to test this application end-to-end.

Ensure you have the latest versions of Boto3 and the Amazon SageMaker Python SDK installed (for example, by running pip install --upgrade boto3 sagemaker).
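To confirm which versions are installed, a small standard-library sketch like the one below reads the installed package metadata. It does not contact PyPI, so compare its output against the latest releases yourself; the helper name is our own.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version string of a package, or None if it is absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


# Report what is installed locally for the two packages this post relies on.
for pkg in ("boto3", "sagemaker"):
    print(f"{pkg}: {installed_version(pkg) or 'not installed'}")
```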

Solution walkthrough

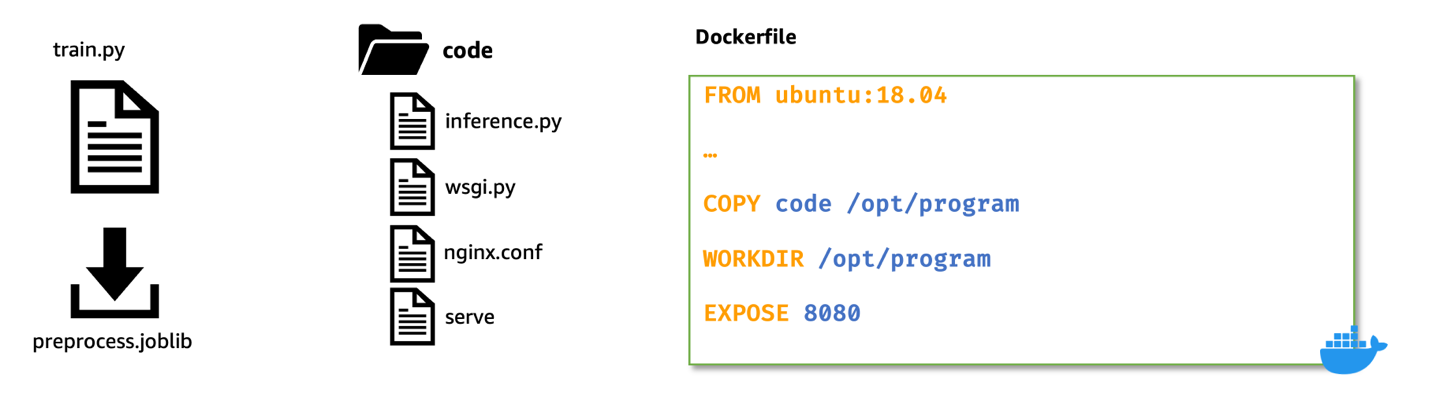

Build custom featurizer container

To build the first container, the featurizer container, we train a scikit-learn model to process raw features in the abalone dataset. The preprocessing script uses SimpleImputer to handle missing values, StandardScaler to normalize numerical columns, and OneHotEncoder to transform categorical columns. After fitting the transformer, we save the model in joblib format. We then compress and upload this saved model artifact to an Amazon Simple Storage Service (Amazon S3) bucket.

Here's a sample code snippet that demonstrates this. Refer to featurizer.ipynb for the full implementation.
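A minimal sketch of this step follows; it is not the notebook's code. It assumes our own abalone column names (the "rings" target is left out of the features), combines the three transformers with a ColumnTransformer, and fits on a tiny made-up sample rather than the real dataset. The artifact is packaged as model.tar.gz containing preprocess.joblib, which is the layout SageMaker extracts into /opt/ml/model.

```python
import os
import tarfile
import tempfile

import joblib
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Assumed abalone feature layout; the "rings" target column is not featurized here.
NUMERIC_COLS = ["length", "diameter", "height", "whole_weight",
                "shucked_weight", "viscera_weight", "shell_weight"]
CATEGORICAL_COLS = ["sex"]


def build_featurizer() -> ColumnTransformer:
    """Impute + scale numeric columns; impute + one-hot encode categorical ones."""
    numeric = Pipeline([
        ("impute", SimpleImputer(strategy="median")),
        ("scale", StandardScaler()),
    ])
    categorical = Pipeline([
        ("impute", SimpleImputer(strategy="constant", fill_value="missing")),
        ("onehot", OneHotEncoder(handle_unknown="ignore")),
    ])
    return ColumnTransformer(
        [("num", numeric, NUMERIC_COLS), ("cat", categorical, CATEGORICAL_COLS)],
        sparse_threshold=0.0,  # always return a dense array
    )


def package_model(featurizer: ColumnTransformer, out_dir: str) -> str:
    """Save the fitted featurizer as preprocess.joblib and wrap it in model.tar.gz."""
    model_path = os.path.join(out_dir, "preprocess.joblib")
    joblib.dump(featurizer, model_path)
    tar_path = os.path.join(out_dir, "model.tar.gz")
    with tarfile.open(tar_path, "w:gz") as tar:
        tar.add(model_path, arcname="preprocess.joblib")
    return tar_path


# Tiny made-up sample (not real abalone rows), including one missing value.
sample = pd.DataFrame({
    "sex": ["M", "F", "I", "M"],
    "length": [0.45, 0.53, np.nan, 0.44],
    "diameter": [0.36, 0.42, 0.28, 0.37],
    "height": [0.09, 0.14, 0.08, 0.12],
    "whole_weight": [0.51, 0.68, 0.21, 0.52],
    "shucked_weight": [0.22, 0.26, 0.09, 0.22],
    "viscera_weight": [0.10, 0.14, 0.04, 0.11],
    "shell_weight": [0.15, 0.21, 0.07, 0.16],
})

featurizer = build_featurizer()
features = featurizer.fit_transform(sample)  # 7 scaled columns + 3 one-hot columns
artifact = package_model(featurizer, tempfile.mkdtemp())
print(features.shape, artifact)
```

The upload could then be done with boto3.client("s3").upload_file(artifact, bucket, key), where the bucket and key are placeholders for your own values.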

Next, to create a custom inference container for the featurizer model, we build a Docker image with the nginx, gunicorn, and flask packages, along with the other dependencies the featurizer model requires.

Nginx, gunicorn, and the Flask app serve as the model serving stack on Amazon SageMaker real-time endpoints.

When bringing custom containers for hosting on Amazon SageMaker, we need to ensure that the inference script performs the following tasks after being launched inside the container:

Model loading: The inference script (preprocessing.py) should refer to the /opt/ml/model directory to load the model in the container. Model artifacts in Amazon S3 will be downloaded and mounted onto the container at the path /opt/ml/model.

Environment variables: To pass custom environment variables to the container, you must specify them during the Model creation step or during Endpoint creation from a training job.

API requirements: The inference script must implement both the /ping and /invocations routes as a Flask application. The /ping API is used for health checks, while the /invocations API handles inference requests.

Logging: Output logs in the inference script must be written to the standard output (stdout) and standard error (stderr) streams. Amazon SageMaker then streams these logs to Amazon CloudWatch.

Here's a snippet from preprocessing.py that shows the implementation of /ping and /invocations.

Refer to preprocessing.py under the featurizer folder for the full implementation.
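As an illustration of the four requirements, here is a minimal sketch of what such a preprocessing.py could look like; it is not the repository's file. It assumes raw rows arrive as headerless CSV in the column order listed in FEATURE_COLUMNS, caches the model loaded from /opt/ml/model, logs to stdout, and returns the transformed rows as CSV. The MODEL_DIR environment variable override is a hypothetical convenience for local runs.

```python
import io
import logging
import os
import sys

import joblib
import pandas as pd
from flask import Flask, Response, request

# Log to stdout so SageMaker forwards the messages to Amazon CloudWatch.
logging.basicConfig(stream=sys.stdout, level=logging.INFO)
logger = logging.getLogger(__name__)

# SageMaker extracts model.tar.gz into /opt/ml/model; the MODEL_DIR override is hypothetical.
MODEL_DIR = os.environ.get("MODEL_DIR", "/opt/ml/model")
# Assumed column order of the raw CSV rows (must match the columns used for training).
FEATURE_COLUMNS = ["sex", "length", "diameter", "height", "whole_weight",
                   "shucked_weight", "viscera_weight", "shell_weight"]

app = Flask(__name__)
_model = None


def load_model():
    """Load preprocess.joblib once and cache it; return None if it is missing."""
    global _model
    if _model is None:
        path = os.path.join(MODEL_DIR, "preprocess.joblib")
        if os.path.exists(path):
            _model = joblib.load(path)
            logger.info("Loaded featurizer from %s", path)
    return _model


@app.route("/ping", methods=["GET"])
def ping():
    # Health check: report healthy (200) only when the model can be loaded.
    status = 200 if load_model() is not None else 404
    return Response(response="\n", status=status, mimetype="application/json")


@app.route("/invocations", methods=["POST"])
def invocations():
    # This sketch accepts raw rows as headerless CSV only.
    if request.mimetype != "text/csv":
        return Response(response="This featurizer only supports CSV data",
                        status=415, mimetype="text/plain")
    model = load_model()
    if model is None:
        return Response(response="Model not loaded", status=503, mimetype="text/plain")
    raw = pd.read_csv(io.StringIO(request.data.decode("utf-8")),
                      header=None, names=FEATURE_COLUMNS)
    transformed = model.transform(raw)
    logger.info("Transformed %d rows", len(raw))
    body = pd.DataFrame(transformed).to_csv(header=False, index=False)
    return Response(response=body, status=200, mimetype="text/csv")
```

Returning CSV keeps the output in a format the next container in a serial pipeline can parse without extra conversion.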

Build Docker image with featurizer and model serving stack

Let's now build a Dockerfile using a custom base image and install the required dependencies.

For this, we use python:3.9-slim-buster as the base image. You can change this to any other base image relevant to your use case.

We then copy the nginx configuration, gunicorn's web server gateway file, and the inference script to the container. We also create a Python script called serve that launches the nginx and gunicorn processes in the background and sets the inference script (i.e., the preprocessing.py Flask application) as the entry point for the container.
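A serve script along these lines is one possible shape; it is a sketch modeled on the common SageMaker bring-your-own-container layout, not the repository's file. It assumes the files live under /opt/program, that nginx.conf contains daemon off; and proxies to the gunicorn Unix socket, and that wsgi.py exposes the Flask object as app. MODEL_SERVER_WORKERS and MODEL_SERVER_TIMEOUT are assumed environment variable names.

```python
import multiprocessing
import os
import signal
import subprocess
import sys
import time

# Assumed paths, following a common SageMaker BYOC layout.
NGINX_CONF = "/opt/program/nginx.conf"
GUNICORN_BIND = "unix:/tmp/gunicorn.sock"


def gunicorn_command(workers: int, timeout: int) -> list:
    """Build the gunicorn command line that serves the Flask app exposed in wsgi.py."""
    return ["gunicorn", "--timeout", str(timeout), "-k", "sync",
            "-b", GUNICORN_BIND, "-w", str(workers), "wsgi:app"]


def start_server() -> None:
    """Start nginx and gunicorn, and stop both as soon as either one exits."""
    workers = int(os.environ.get("MODEL_SERVER_WORKERS", multiprocessing.cpu_count()))
    timeout = int(os.environ.get("MODEL_SERVER_TIMEOUT", 60))

    procs = [
        subprocess.Popen(["nginx", "-c", NGINX_CONF]),
        subprocess.Popen(gunicorn_command(workers, timeout)),
    ]

    def shutdown(*_args):
        # Terminate whichever child is still running, then exit.
        for proc in procs:
            if proc.poll() is None:
                proc.terminate()
        sys.exit(0)

    # SageMaker stops containers with SIGTERM; forward the shutdown to the children.
    signal.signal(signal.SIGTERM, shutdown)

    # Poll until one of the two processes exits, then shut everything down.
    while all(proc.poll() is None for proc in procs):
        time.sleep(1)
    shutdown()
```

The real script would end by calling start_server() and would be placed on the container's PATH, because SageMaker starts hosting containers with docker run <image> serve.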

Here's a snippet of the Dockerfile for hosting the featurizer model. For the full implementation, refer to the Dockerfile under the featurizer folder.

Test custom inference image with featurizer locally

Now, build and test the custom inference container with the featurizer locally, using Amazon SageMaker local mode. Local mode is ideal for testing your processing, training, and inference scripts without launching any jobs on Amazon SageMaker. After confirming the results of your local tests, you can easily adapt the training and inference scripts for deployment on Amazon SageMaker with minimal changes.

To test the featurizer custom image locally, first build the image using the previously defined Dockerfile. Then, launch a container by mounting the directory containing the featurizer model (preprocess.joblib) to the /opt/ml/model directory inside the container. Additionally, map port 8080 from the container to the host.

Once the container is launched, you can send inference requests to http://localhost:8080/invocations.

To build and launch the container, open a terminal and run the following commands.

Note that you should replace <IMAGE_NAME>, as shown in the following code, with the image name of your container.

The following command also assumes that the trained scikit-learn model (preprocess.joblib) is present under a directory called models.

After the container is up and running, we can test both the /ping and /invocations routes using curl commands.

Run the following commands from a terminal.

When raw (untransformed) data is sent to http://localhost:8080/invocations, the endpoint responds with transformed data.

You should see a response containing the transformed (featurized) rows.
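If you prefer to script these checks in Python rather than curl, here is a minimal standard-library sketch. It assumes port 8080 is mapped to localhost as described above; the helper names are hypothetical. Note that urlopen raises urllib.error.HTTPError for non-2xx replies, such as a 404 from /ping when no model was mounted.

```python
import urllib.request

BASE_URL = "http://localhost:8080"  # host port mapped to the container's port 8080


def build_ping_request(base_url: str = BASE_URL) -> urllib.request.Request:
    """GET /ping, the health-check route."""
    return urllib.request.Request(f"{base_url}/ping", method="GET")


def build_invocation_request(csv_rows: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """POST raw, untransformed CSV rows to the /invocations route."""
    return urllib.request.Request(
        f"{base_url}/invocations",
        data=csv_rows.encode("utf-8"),
        headers={"Content-Type": "text/csv"},
        method="POST",
    )


def send(req: urllib.request.Request, timeout: float = 10.0):
    """Send a request and return (HTTP status, decoded response body)."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.status, resp.read().decode("utf-8")
```

With the container running, send(build_ping_request()) should report status 200, and send(build_invocation_request(rows)) returns the featurized rows for your raw CSV input.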

We now stop the running container, and then tag and push the local custom image to a private Amazon Elastic Container Registry (Amazon ECR) repository.

See the following commands to log in to Amazon ECR, tag the local image with the full Amazon ECR image path, and then push the image to Amazon ECR. Make sure you change the region and account variables to match your environment.

For more information, refer to the AWS Command Line Interface (AWS CLI) commands for creating a repository and pushing an image to Amazon ECR.
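The login step needs a password from the ECR GetAuthorizationToken API. As a hedged Python sketch using boto3, the helpers below build the full image path and decode the token. The repository name you pass is up to you, and the URI format assumes the standard commercial AWS partition (China regions use a different domain).

```python
import base64
from typing import Tuple


def ecr_registry(account_id: str, region: str) -> str:
    """Registry host of a private ECR registry in the standard AWS partition."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com"


def ecr_image_uri(account_id: str, region: str, repository: str, tag: str = "latest") -> str:
    """Full image path to use with docker tag and docker push."""
    return f"{ecr_registry(account_id, region)}/{repository}:{tag}"


def decode_authorization_token(token: str) -> Tuple[str, str]:
    """ECR tokens are base64("user:password"); split them for docker login."""
    user, _, password = base64.b64decode(token).decode("utf-8").partition(":")
    return user, password


def docker_login_credentials(ecr_client=None) -> Tuple[str, str, str]:
    """Return (username, password, registry endpoint) from GetAuthorizationToken."""
    if ecr_client is None:
        import boto3  # requires AWS credentials and a default region to be configured
        ecr_client = boto3.client("ecr")
    auth = ecr_client.get_authorization_token()["authorizationData"][0]
    user, password = decode_authorization_token(auth["authorizationToken"])
    return user, password, auth["proxyEndpoint"]
```

The password could then be piped into docker login --username AWS --password-stdin <registry endpoint>, and the local image tagged with the value of ecr_image_uri(...) before running docker push.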

Optional step

Optionally, you could perform a live test by deploying the featurizer model to a real-time endpoint with the custom Docker image in Amazon ECR. Refer to the featurizer.ipynb notebook for the full implementation of building, testing, and pushing the custom image to Amazon ECR.

Amazon SageMaker initializes the inference endpoint and copies the model artifacts to the /opt/ml/model directory inside the container. See How SageMaker Loads Your Model Artifacts.
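For the optional live test, the deployment could look roughly like the following sketch using the SageMaker Python SDK (v2) Model class. The S3 key layout, instance type, and helper names are assumptions, and role must be an IAM role that SageMaker can assume (inside a SageMaker notebook, sagemaker.get_execution_role() returns one). predictor_cls is set because Model.deploy() otherwise returns None.

```python
def s3_model_uri(bucket: str, prefix: str) -> str:
    """S3 location of the uploaded model.tar.gz (the key layout is an assumption)."""
    return f"s3://{bucket}/{prefix.strip('/')}/model.tar.gz"


def deploy_featurizer(image_uri: str, model_data: str, role: str,
                      instance_type: str = "ml.m5.xlarge"):
    """Deploy the featurizer image on its own to a real-time endpoint for a live test."""
    # SageMaker Python SDK v2 imports (kept local so the module imports without the SDK).
    from sagemaker.model import Model
    from sagemaker.predictor import Predictor

    model = Model(image_uri=image_uri, model_data=model_data,
                  role=role, predictor_cls=Predictor)
    # SageMaker downloads model_data and extracts it into /opt/ml/model in the container.
    return model.deploy(initial_instance_count=1, instance_type=instance_type)
```

The returned predictor exposes delete_endpoint(), which is worth calling once the live test is finished to avoid paying for an idle instance.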

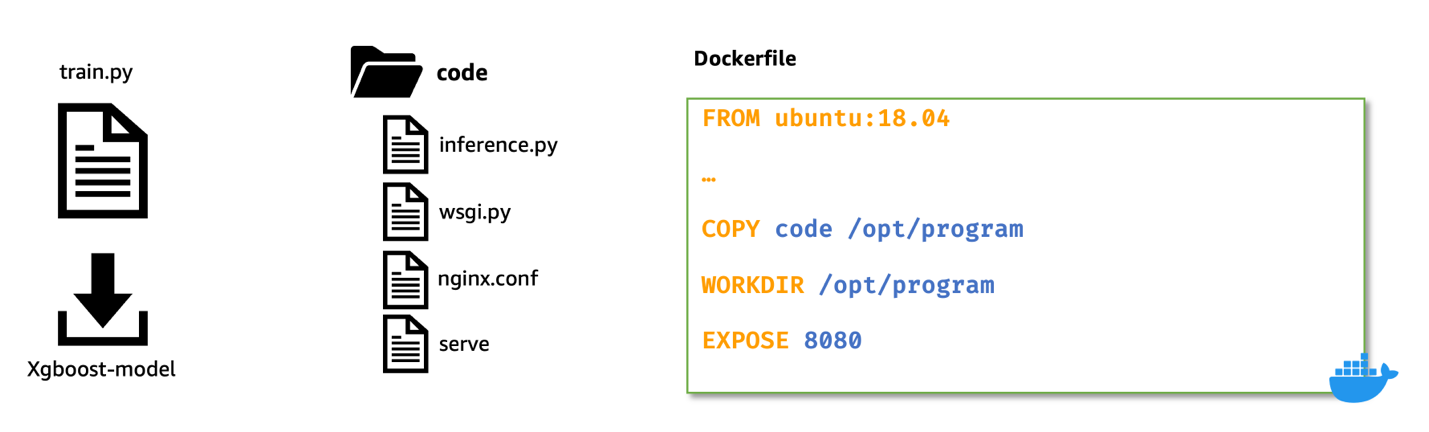

Build custom XGBoost predictor container

To build the XGBoost inference container, we follow steps similar to those we used to build the featurizer container image:

Download the pretrained XGBoost model from Amazon S3.

Create the inference.py script that loads the pretrained XGBoost model, converts the transformed input data received from the featurizer to the xgboost.DMatrix format, runs predict on the booster, and returns predictions in JSON format.

The scripts and configuration files that form the model serving stack (i.e., nginx.conf, wsgi.py, and serve) remain the same and need no modification.

We use ubuntu:18.04 as the base image for the Dockerfile. This isn't a prerequisite; we use the Ubuntu base image to demonstrate that containers can be built with any base image.

The steps for building the custom Docker image, testing the image locally, and pushing the tested image to Amazon ECR remain the same as before.

For brevity, because the steps are similar to those shown previously, we only show the changed code in the following.

First, the inference.py script. Here's a snippet that shows the implementation of /ping and /invocations. Refer to inference.py under the predictor folder for the full implementation of this file.
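A minimal sketch of such an inference.py follows; it is not the repository's file. It assumes the model file is named xgboost-model (as in this post), that the featurizer sends headerless CSV, and that predictions are returned as a JSON object with a predictions key. xgboost is imported lazily only so the module can be imported, for example in unit tests, without it.

```python
import io
import json
import logging
import os
import sys

import numpy as np
from flask import Flask, Response, request

logging.basicConfig(stream=sys.stdout, level=logging.INFO)
logger = logging.getLogger(__name__)

MODEL_DIR = os.environ.get("MODEL_DIR", "/opt/ml/model")  # override name is hypothetical
MODEL_FILE = "xgboost-model"

app = Flask(__name__)
_booster = None


def load_booster():
    """Load the pretrained XGBoost booster once; return None if the file is missing."""
    global _booster
    if _booster is None:
        path = os.path.join(MODEL_DIR, MODEL_FILE)
        if os.path.exists(path):
            import xgboost as xgb  # lazy import (see lead-in)
            booster = xgb.Booster()
            booster.load_model(path)
            _booster = booster
            logger.info("Loaded XGBoost model from %s", path)
    return _booster


def parse_csv_payload(payload: str) -> np.ndarray:
    """Parse the featurizer's headerless CSV rows into a 2-D float array."""
    return np.loadtxt(io.StringIO(payload), delimiter=",", ndmin=2)


@app.route("/ping", methods=["GET"])
def ping():
    status = 200 if load_booster() is not None else 404
    return Response(response="\n", status=status, mimetype="application/json")


@app.route("/invocations", methods=["POST"])
def invocations():
    if request.mimetype != "text/csv":
        return Response("This predictor only supports CSV data", status=415, mimetype="text/plain")
    booster = load_booster()
    if booster is None:
        return Response("Model not loaded", status=503, mimetype="text/plain")
    import xgboost as xgb  # already loaded by load_booster, so this is cheap
    features = parse_csv_payload(request.data.decode("utf-8"))
    predictions = booster.predict(xgb.DMatrix(features))
    body = json.dumps({"predictions": predictions.tolist()})
    return Response(body, status=200, mimetype="application/json")
```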

Here's a snippet of the Dockerfile for hosting the predictor model. For the full implementation, refer to the Dockerfile under the predictor folder.

We then build, test, and push this custom predictor image to a private repository in Amazon ECR. Refer to the predictor.ipynb notebook for the full implementation of building, testing, and pushing the custom image to Amazon ECR.

Deploy serial inference pipeline

After we have tested both the featurizer and predictor images and pushed them to Amazon ECR, we upload our model artifacts to an Amazon S3 bucket.

Then, we create two model objects, one for the featurizer (i.e., preprocess.joblib) and the other for the predictor (i.e., xgboost-model), by specifying the custom image URIs we built earlier.

Here's a snippet that shows this. Refer to serial-inference-pipeline.ipynb for the full implementation.

Now, to deploy these containers in a serial fashion, we first create a PipelineModel object and pass the featurizer model and the predictor model to it in a Python list, in that order.

Then, we call the .deploy() method on the PipelineModel, specifying the instance type and instance count.
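A sketch of these steps with the SageMaker Python SDK (v2) follows. The image URIs, S3 paths, role, instance type, and the example environment variable are placeholders or assumptions; the env= argument on the predictor model shows where custom environment variables go at model creation. predictor_cls=Predictor is passed so that deploy() returns a predictor object rather than None.

```python
from typing import Optional


def deploy_serial_pipeline(featurizer_image: str, featurizer_data: str,
                           predictor_image: str, predictor_data: str,
                           role: str, instance_type: str = "ml.m5.xlarge",
                           instance_count: int = 1,
                           endpoint_name: Optional[str] = None):
    """Create both models, chain them in a PipelineModel, and deploy one endpoint."""
    # SageMaker Python SDK v2 imports (kept local so the module imports without the SDK).
    from sagemaker.model import Model
    from sagemaker.pipeline import PipelineModel
    from sagemaker.predictor import Predictor

    featurizer_model = Model(image_uri=featurizer_image,
                             model_data=featurizer_data,  # model.tar.gz with preprocess.joblib
                             role=role)
    predictor_model = Model(image_uri=predictor_image,
                            model_data=predictor_data,  # model.tar.gz with xgboost-model
                            role=role,
                            env={"LOG_LEVEL": "INFO"})  # hypothetical custom env var

    # Order matters: the featurizer's response becomes the predictor's request.
    pipeline = PipelineModel(models=[featurizer_model, predictor_model],
                             role=role, predictor_cls=Predictor)
    return pipeline.deploy(initial_instance_count=instance_count,
                           instance_type=instance_type,
                           endpoint_name=endpoint_name)
```

Both containers run on the same instance behind one endpoint, so the instance type has to accommodate the combined resource needs of the featurizer and the predictor.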

At this stage, Amazon SageMaker deploys the serial inference pipeline to a real-time endpoint. We wait for the endpoint to be InService.

We can now test the endpoint by sending some inference requests to this live endpoint.

Refer to serial-inference-pipeline.ipynb for the full implementation.
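Requests can also be sent with the low-level sagemaker-runtime client in boto3. This sketch assumes the endpoint accepts raw rows as CSV and that the predictor container returns JSON; the endpoint name is a placeholder, and runtime_client can be injected so the function is testable without AWS access.

```python
import csv
import io
import json


def rows_to_csv(rows) -> str:
    """Serialize raw (untransformed) rows as headerless CSV."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue()


def predict(rows, endpoint_name: str, runtime_client=None):
    """Send raw rows through the serial pipeline endpoint and decode the JSON reply."""
    if runtime_client is None:
        import boto3  # requires AWS credentials and a default region
        runtime_client = boto3.client("sagemaker-runtime")
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",
        Accept="application/json",
        Body=rows_to_csv(rows),
    )
    # The body is a streaming object; read it fully before decoding.
    return json.loads(response["Body"].read())
```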

Clean up

After you're done testing, follow the instructions in the cleanup section of the notebook to delete the resources provisioned in this post and avoid unnecessary charges. Refer to Amazon SageMaker Pricing for details on the cost of the inference instances.
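If you prefer to clean up with boto3 instead of the notebook, a sketch like the following deletes the endpoint, its endpoint configuration, and the model(s) it references; for a PipelineModel deployment that is a single SageMaker model containing both containers. The function name is our own. Check the endpoint name before running it, because these deletions cannot be undone.

```python
def delete_endpoint_resources(endpoint_name: str, sm_client=None) -> list:
    """Delete an endpoint, its endpoint config, and the models it references."""
    if sm_client is None:
        import boto3  # requires AWS credentials and a default region
        sm_client = boto3.client("sagemaker")

    # Look up the config and model names before deleting anything.
    endpoint = sm_client.describe_endpoint(EndpointName=endpoint_name)
    config_name = endpoint["EndpointConfigName"]
    config = sm_client.describe_endpoint_config(EndpointConfigName=config_name)
    model_names = [variant["ModelName"] for variant in config["ProductionVariants"]]

    sm_client.delete_endpoint(EndpointName=endpoint_name)
    sm_client.delete_endpoint_config(EndpointConfigName=config_name)
    for name in model_names:
        sm_client.delete_model(ModelName=name)
    return [endpoint_name, config_name, *model_names]
```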

Conclusion

In this post, I showed how to build a serial ML inference application with custom inference containers and deploy it to real-time endpoints on Amazon SageMaker.

This solution demonstrates how customers can bring their own custom containers for hosting on Amazon SageMaker in a cost-efficient manner. With the BYOC option, customers can quickly build and adapt their ML applications for deployment on Amazon SageMaker.

We encourage you to try this solution with a dataset relevant to your business Key Performance Indicators (KPIs). You can find the entire solution in this GitHub repository.


About the Author

Praveen Chamarthi is a Senior AI/ML Specialist with Amazon Web Services. He is passionate about AI/ML and all things AWS. He helps customers across the Americas scale, innovate, and operate ML workloads efficiently on AWS. In his spare time, Praveen loves to read and enjoys sci-fi movies.
